User Interviews: Types, Process, and How to Analyze Results

Learn how to run, analyze, and get more value from user interviews with the right tools.

You can stare at analytics dashboards all day and still not know what’s actually going on. Analytics tell you what happened, but you still need to figure out why it happened.

Why did users abandon onboarding halfway through? Why do they keep ignoring that feature you thought they’d love?

User interviews are one of the most reliable ways to understand the reasons behind user behavior. Running them well takes skill: knowing when to probe, when to pause, and how to build rapport. All of these shape the insights you get from your research.

In this guide, you’ll see what UX interviews are, when to use them, and how to run interviews that actually lead to decisions.

What are user interviews?

A user interview is a one-on-one research conversation with someone who uses, buys, or is affected by your product. In UX research, the goal is to understand a person’s experience in enough detail to support product decisions.

The process is the same in most cases. You ask planned questions and use follow-up questions to get fuller answers. It’s also flexible across the product lifecycle: in discovery, before design work starts, during usability testing, and after launch.

Why user interviews matter

UX user interviews give you more detail than surface-level feedback would.

They help you:

- Understand the experience behind the action: You can learn what the user was trying to do and what influenced their choice.

- Catch pain points earlier: You’ll see issues before they become obvious in product metrics or support tickets.

- Hear the user’s actual language: The words people use can make later analysis clearer and help your team describe problems more accurately.

- Add context to other research methods: Interview insights help explain the patterns you see in surveys, analytics, or usability tests.

- Support key UX outputs: Good interview data can feed personas, journey maps, user-need statements, and feature decisions.

Types of user interviews

You can run user interviews in different ways, depending on what you need to learn and how deep you need to go.

Here are the main types of UX research interviews you can conduct:

- Structured interviews: You ask each participant a prepared set of questions in a fixed order. Use this method to maintain consistency across interviews and compare answers more easily.

- Semi-structured interviews: You prepare key questions in advance, but you don’t stick to a script word-for-word. There’s still a guide you’ll follow, but you can also ask follow-up questions when you want to dig deeper into an insight.

- Unstructured interviews: Here, you go in with a topic, but there’s no fixed question list. The conversation is open and can take any direction. You can use this when you’re exploring a new area or want participants to lead with what matters most to them.

- Contextual interviews: You interview the participant in their usual environment while they’re using your product or service. It helps you see the workarounds and constraints that may never come up in a standard call.

- Remote interviews: You run the session over video or phone. Remote interviews are a good fit when your participants are spread across geographies or when you need immediate feedback. They are easier to schedule, though you may miss some environmental details.

- Group interviews: You have more than one participant in every session. You’ll hear shared views and points of disagreement. This setting needs moderation, as a few voices can dominate the discussion.

Semi-structured interviews are often the most useful starting point. They give you enough structure to stay focused and enough freedom to learn something you did not plan for.

How to conduct user interviews step by step

Conducting a good user interview starts long before the call. Let’s look at all the steps in detail.

Step 1: Set a clear research goal

Without a specific goal, you'll collect a lot of conversation and not much you can use. Broad goals like "learn about users" produce interviews that go in too many directions at once.

Have more focused goals. For example, "Why do users drop off before completing their first setup?" or "What makes someone decide to upgrade their plan?"

When you’re that specific, your data becomes easier to work with.

Whenever possible, get input from product stakeholders when writing your goal. They often know which questions could expose friction and reveal what’s not working. That way, your research stays actionable and feeds directly into real decisions.

Step 2: Find and recruit the right participants

Once your goal is set, you need people who can actually speak to it. Talking to the wrong participants gives you data that doesn't apply.

Five to ten participants per study is a workable starting point. To make sure you're selecting the right people, send a short screener survey before confirming anyone.

A screener doesn't need to be long. A few questions about their role, product usage, or experience level can be enough to filter out people who aren't a good match.

Step 3: Write your discussion guide

With a discussion guide, you can keep the interview on track. You can start with an introduction about who you are and the objective of the session. Participants are more comfortable when they know what to expect.

Keep your UX research questions open-ended and focused on behavior. For example, "Walk me through the last time you did X" gets you more useful detail than "Do you think X is easy to use?"

Add follow-up questions to your guide. When a participant says something useful, ask "Can you say more about that?" or "What happened next?" Such questions take you further than simply moving on to your next prepared question.

Step 4: Run a pilot session first

Before you interview real participants, test your discussion guide in a pilot session. You can invite a colleague to play the role of participant, or recruit someone who is close to your target profile even if they are not an exact fit.

A pilot helps you identify confusing questions, overly long sections, and awkward transitions. It’s much easier to fix these before you’ve burned through your actual participant list.

Step 5: Conduct the interview

Start with easy, open questions before you get into the specific topic. It takes most people a few minutes to settle into a conversation.

Ask one question at a time. When a participant finishes speaking, pause before you respond. It prompts them to add something they wouldn't have said otherwise.

Take notes during the session, but don't try to capture everything word-for-word. Look for any surprises or direct quotes worth keeping.

Step 6: Review the interview while it is still fresh

Do not wait too long after the session. Do a quick debrief while the interview is still fresh.

It’s easier if you're recording the session with the participant's consent. You get to keep your notes light and go back for details later.

You can use tools that auto-transcribe sessions and let you tag moments in real time. This way, you don’t lose anything important in a long recording.

How to analyze user interviews

Raw notes and recordings don't tell you much on their own. You need to work through the data to see the patterns.

Here's how you do it.

Centralize all your data.

Collect all your notes and recordings in one place before you start. Transcribe the interview recordings, if any, with automated transcription tools.

Once everything is in one place, read through your notes carefully. At this point, you're only getting familiar with the material, so you can start making connections.

Tag and organize by theme.

A tag is just a label that tells you which topic a note belongs to. For instance, if you interviewed users about an onboarding flow, you can create a tag for drop-off reasons, then apply it to every response related to drop-offs.

You don't need to finalize your tags before you start. Add new ones as you go, and merge or split them when the data calls for it. You can do this in a spreadsheet or in UX research tools that support automated tagging.

Look for patterns across participants.

Now, filter down to one theme at a time and look at what multiple participants said about it.

You're looking for responses that cluster together, responses that contradict each other, and anything that shows up more than once. Grouping related notes this way is called affinity mapping.

You can also segment responses by user type or experience level. That’s because some issues only show up in certain groups, and segmenting keeps those differences visible instead of blending them across all participants.
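If you keep tagged notes in a spreadsheet export or a simple script, the pattern check can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed workflow: the note format, the sample tags and quotes, and the function names `participants_per_tag`, `recurring_tags`, and `tags_by_segment` are all hypothetical.

```python
from collections import defaultdict

# Hypothetical tagged notes: (participant, segment, tag, quote).
notes = [
    ("P1", "new user", "drop-off: unclear next step", "I didn't know what to click after sign-up."),
    ("P2", "new user", "drop-off: unclear next step", "The setup page just ended."),
    ("P3", "power user", "drop-off: too many fields", "The form asked for things I didn't have."),
    ("P3", "power user", "drop-off: unclear next step", "I guessed where to go next."),
    ("P4", "new user", "drop-off: too many fields", "I gave up on the long form."),
]


def participants_per_tag(notes):
    """Map each tag to the set of participants who mentioned it."""
    by_tag = defaultdict(set)
    for participant, _segment, tag, _quote in notes:
        by_tag[tag].add(participant)
    return dict(by_tag)


def recurring_tags(notes, min_participants=2):
    """Return tags raised by at least `min_participants` different people."""
    by_tag = participants_per_tag(notes)
    return sorted(tag for tag, people in by_tag.items() if len(people) >= min_participants)


def tags_by_segment(notes):
    """Map each segment to its tags and the participants behind each tag."""
    result = defaultdict(lambda: defaultdict(set))
    for participant, segment, tag, _quote in notes:
        result[segment][tag].add(participant)
    return {segment: dict(tags) for segment, tags in result.items()}


if __name__ == "__main__":
    print(recurring_tags(notes))
    for segment, tags in tags_by_segment(notes).items():
        print(segment, {tag: sorted(people) for tag, people in tags.items()})
```

Counting distinct participants rather than raw mentions keeps one talkative participant from making a theme look more common than it is.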

Turn findings into something usable.

When you can describe your insights in a way your team can act on, your analysis is ready. It could be a list of pain points, a user journey, or a set of need statements.

Before you move on, make sure you store the insights somewhere your team can search and reference later. It can be an insights library or a repository. That way, going back to a specific interview six months from now should only take minutes.

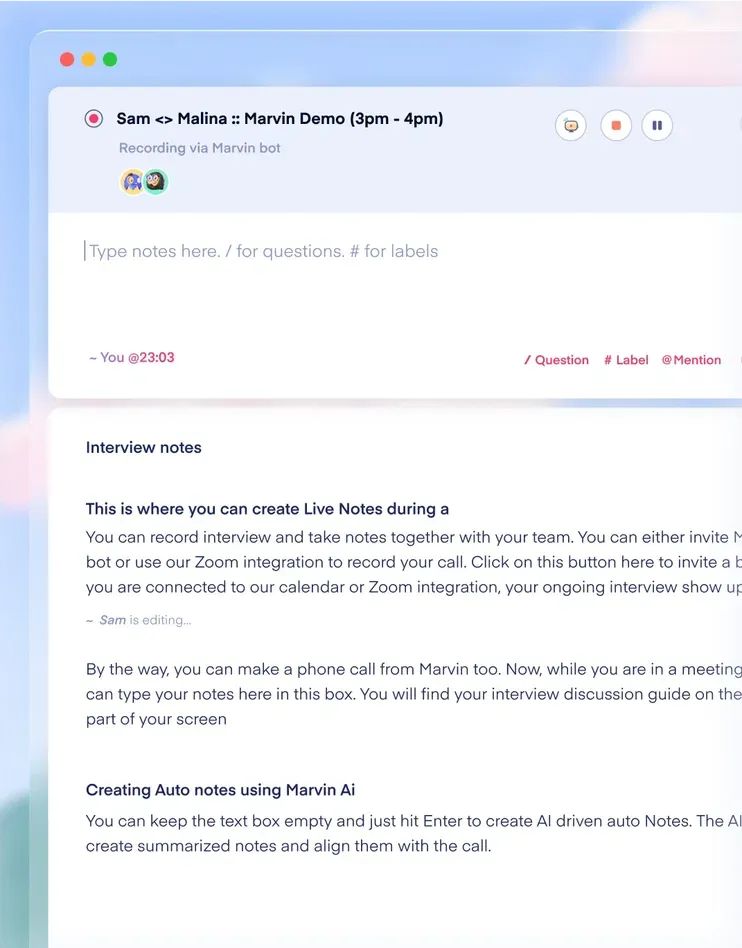

What HeyMarvin does in UX interviews

Now, how much of that work can you speed up without losing your research quality? HeyMarvin covers all of it in the following ways:

- Conduct and record user interviews: Record each session and keep it organized, along with the rest of your research.

- AI note-taking and transcription: Attach your own notes to time-stamped transcripts, or have AI generate notes automatically. The conversation and notes remain searchable even after the session is over.

- AI-moderated interviews: Use your own discussion guide and question logic to run studies at a larger scale or in a shorter timeframe.

- AI thematic analysis and affinity mapping: Review recurring topics and sort responses with label suggestions to see patterns easily.

- Searchable research repository: Keep interviews, usability tests, notes, CSAT, NPS, and research files together. The tools come with multilingual support and include PII redaction.

If your team handles regular UX interviews, all these capabilities mean less time spent piecing research together after the session ends. Download our 2025 State of Research Repositories Report to see how leading teams structure their research programs.

Frequently asked questions (FAQs)

Let’s look at a few common questions you’ll have regarding UX interviews:

How many user interviews should you do?

For UX interviews, there’s no fixed number. The exact number depends on your audience mix and the level of variation across responses. When you notice participants repeating the same observations, you've likely collected enough for that research goal.

But if you're running AI-moderated sessions, you can scale participation and bring down scheduling costs.
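One rough way to notice when participants are repeating the same observations is to count how many new tags each interview adds. The sketch below assumes you already have one set of tags per interview, in the order the sessions were run. The function names and the two-interview window are illustrative assumptions, not a standard threshold.

```python
def new_tags_per_interview(interviews):
    """For each interview, count the tags not seen in any earlier interview."""
    seen = set()
    counts = []
    for tags in interviews:
        fresh = set(tags) - seen
        counts.append(len(fresh))
        seen |= fresh
    return counts


def looks_saturated(interviews, window=2):
    """True if the last `window` interviews added no new tags (assumed heuristic)."""
    counts = new_tags_per_interview(interviews)
    return len(counts) >= window and all(count == 0 for count in counts[-window:])


if __name__ == "__main__":
    # Hypothetical tag sets from four interviews.
    interviews = [
        {"unclear next step", "too many fields"},
        {"too many fields", "pricing confusion"},
        {"unclear next step"},
        {"pricing confusion", "too many fields"},
    ]
    print(new_tags_per_interview(interviews))
    print(looks_saturated(interviews))
```

Treat the result as a prompt to review your data, not as a stopping rule. A new participant segment can still surface themes the earlier sessions missed.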

What is an AI-moderated UX interview?

An AI-moderated UX interview is a session where software asks the questions, handles follow-ups within a set guide, and captures the response data for you.

AI interviews are useful for gathering structured feedback at scale. This also includes product feedback and multilingual interviews. But they are not a replacement for deeper human-led, semi-structured interviews.

A setup like this gives you time-stamped transcripts, searchable records, and guide-based question logic, which also speeds up review after the session ends.

What are good UX interview questions?

Good UX interview questions help people tell you what happened. They are mostly open-ended and have follow-up prompts to get details and clarification.

Questions tied to a specific event usually work better than abstract opinion questions. For example, “Tell me about the last time you tried to do X” will often give you more usable detail than “How do you usually do X?” Good questions also stay neutral, so you are not pushing the participant toward a preferred answer.

What is the difference between UX interviews and surveys?

UX interviews help you gain depth of insight. Surveys are better when you need larger reach. Surveys are faster and cheaper, but they do not fit every research goal.

To put it simply:

- If you want to know how many users experience a problem, run a survey.

- If you want to deeply understand the problem, run interviews.

Conclusion

User interviews are an efficient way to understand the reasons behind user behavior and its outcomes. When you set a clear goal, ask open questions, and analyze interviews carefully, you end up with findings your team can actually use.

Managing all of that across multiple sessions takes time. With HeyMarvin, you can expedite the process. It records and transcribes sessions automatically, runs AI-moderated interviews at scale, tags themes across transcripts, and keeps everything in a searchable repository your whole team can use.

If your team wants a cleaner way to run UX interviews and keep the findings usable even after the study ends, book a demo with HeyMarvin.

