Types of user experience testing methods and how to use them

Master UX testing with essential methods and advanced techniques to enhance user experience design.

Every great product has a story, and UX testing helps you write its chapters. Without it, you risk rolling out features that frustrate users, costing you time, money, and trust.

In this guide, we review the most effective UX testing methods, the potential obstacles, and how to overcome them. After all, gathering data is just the start; the real challenge is turning it into meaningful insights.

But with our AI-powered research assistant, this process can take mere hours instead of days. Create a free account with HeyMarvin to centralize all your qualitative research and analyze it with speed and accuracy.

TL;DR - User experience testing methods

UX testing methods fall into different categories depending on how you run your tests (moderated, unmoderated, remote, in-person) and what techniques you use:

- A/B testing

- Card sorting

- Tree testing

- Surveys and questionnaires

- Usability testing

- Eye-tracking studies

- Diary studies

- Click testing

Each method explores a specific layer of the user experience, from behavior to perception. You can choose to measure what users do and how often (quantitative testing) or explore why they do it (qualitative testing).

Ultimately, you’ll combine multiple user experience testing methods, depending on your goals.

What is UX testing?

UX testing is a research method that helps you understand how users interact with your product. It uncovers potential issues, pain points, or frustrations and shows whether your design works as intended or confuses users.

You test by observing real people as they try to complete tasks in your app, website, or system. While looking for flaws, you also try to validate what works. The whole point of UX testing is to help you make your product more:

- Intuitive

- Efficient

- Enjoyable to use

For example, say you’re designing a checkout process for an e-commerce app.

During UX testing, some participants hesitate to buy because they can’t figure out how to apply a discount code. Others abandon the process because the payment page feels cluttered.

These insights suggest that the discount code field needs better placement or labeling and that the payment page needs a cleaner design.

UX testing helps you smooth out the bumps and catch these hiccups before they frustrate customers and hurt sales.

Why is UX testing important?

By providing insights that improve your product at every stage, UX testing takes your design from good to great. It also spares you the expensive mistakes along the way.

Here’s why it’s a must for your design process:

- Validates your ideas: UX testing shows what works and what doesn’t, giving you confidence in your design choices.

- Uncovers hidden problems: Users may interact with your product in ways you don’t expect. That’s your chance to spot gaps and confusion you’d miss otherwise.

- Saves time and money: It’s much cheaper to fix a design flaw while it’s still on the whiteboard than after it’s live.

- Boosts user satisfaction: A smooth, intuitive experience keeps users happy, loyal, and excited to use your product again.

- Builds trust in your brand: When your product feels intuitive, users trust it and are more likely to recommend it.

User experience vs. usability testing: key differences

While many teams use “user experience testing” and “usability testing” interchangeably, these two terms have distinct scopes.

UX testing evaluates the entire user experience: How do users perceive your product? What do they feel about it? How do they interact with it?

Usability testing is just one method within this broader evaluation. It focuses on how easily users can complete specific tasks.

Once you understand the connection between the two, the key difference is clear: UX testing looks at the whole experience (perceptions, feelings, and interactions), while usability testing zooms in on task completion.

In practice, researchers often start with usability testing to identify friction in specific flows. Then they use other methods to understand the reasons behind the issues that usability testing revealed.

Types of user experience testing methods

Products are complex, but users’ interactions with them can be even more so. That’s why each UX testing method explores a different layer of the experience.

We break them down below so you can understand their strengths and choose the right one for your product challenges.

Modes of Testing

There are four overarching ways to conduct user experience tests. They fall along two dimensions: who guides the session (moderated vs. unmoderated) and where it takes place (remote vs. in-person).

- Moderated testing: You, as the researcher, guide users through tasks. You observe, ask questions, and collect real-time feedback. This approach is excellent for complex workflows where user actions need clarification.

  - Example: Testing how developers onboard to an API setup tool.

- Unmoderated testing: Users complete tasks independently, often using automated tools. It’s faster and more scalable but doesn’t let you probe deeper in the moment.

  - Example: Testing if users can locate a new navigation menu item.

- Remote testing: This happens online, often through screen sharing or testing platforms. It's ideal for distributed user bases or when logistics make in-person testing difficult.

  - Example: Observing a global user group interact with a SaaS dashboard.

- In-person testing: You and the participants are in the same location. You get to notice subtle reactions, like body language or hesitation.

  - Example: Testing a new point-of-sale system in a retail store.

Rather than picking just one mode, consider combining them based on your goals. For example, usability testing can be:

- Moderated and remote: You guide participants through a task via Zoom.

- Unmoderated and remote: Users perform tasks independently, with their screens recorded.

- Moderated and in-person: You sit with a user while they interact with your prototype.

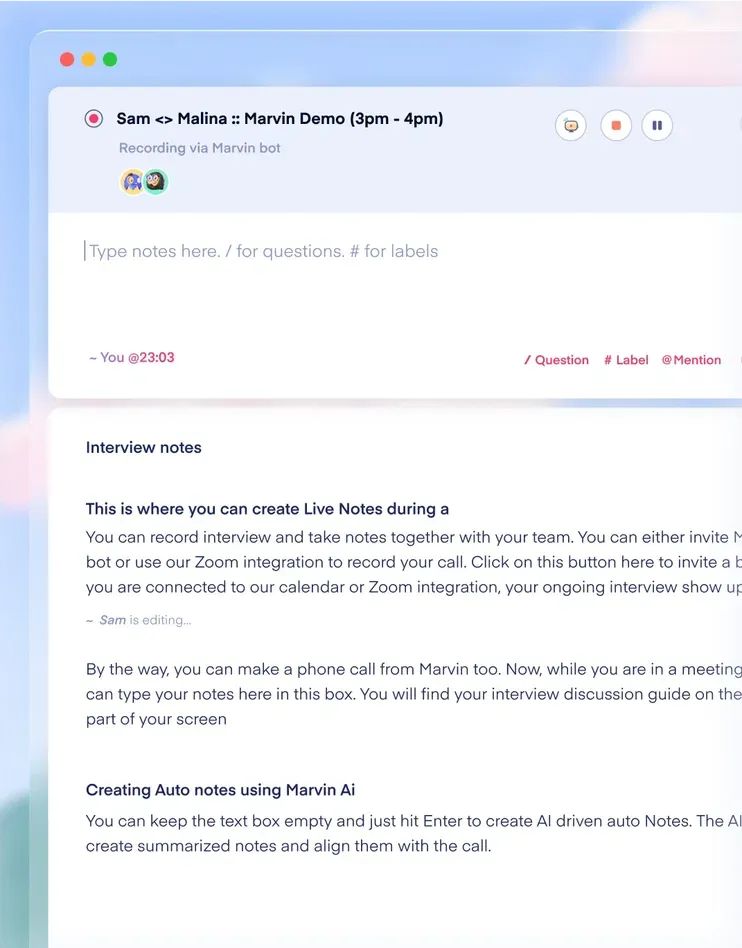

However challenging data collection may be, the hard work doesn’t stop there. After running these UX tests, it’s time to centralize and analyze your results. You want to uncover actionable insights fast. That’s where HeyMarvin steps in.

Our UX research repository and AI-powered assistant can easily streamline your post-research workflow.

Create a free account with HeyMarvin today to see how it can analyze your UX testing research and distill it into relevant visual reports.

Techniques Within Each Mode

The following are specific methods you can adapt to any UX testing mode — moderated, unmoderated, remote, or in-person:

A/B Testing

With this data-driven method, you present two versions of a design (A and B) to users and compare their performance.

You can run it in live environments, on a website or app, by splitting traffic between versions to measure real-world behavior. For instance, test whether a red or green sign-up button drives more clicks.
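If you split live traffic yourself, a common approach is to bucket users deterministically, so each person keeps seeing the same version on every visit. Here’s a minimal Python sketch, assuming each user has a stable ID; the function name `assign_variant` and the experiment label are hypothetical, and SHA-256 hashing is just one reasonable choice. Dedicated A/B testing tools usually handle this step for you.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "signup-button-color") -> str:
    """Deterministically bucket a user into variant "A" or "B" (about 50/50).

    Hashing the experiment name together with the user ID keeps each user's
    assignment stable across visits and independent between experiments.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % 100  # 0..99
    return "A" if bucket < 50 else "B"
```

Salting the hash with the experiment name means a user who lands in group A for one test isn’t automatically in group A for the next.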

You can also run A/B tests asynchronously in controlled settings, for example by sending participants through two versions of a prototype or pre-recorded workflow.

While it’s excellent for measuring outcomes (e.g., clicks or conversions), A/B testing does not explain why users prefer one version. Pair it with qualitative methods, such as usability testing or interviews, to get the full picture.
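Before declaring a winner, it’s worth checking whether the difference in clicks or conversions is bigger than random noise. The sketch below uses a pooled two-proportion z-test, one standard option for comparing two conversion rates when samples are reasonably large. The function name and the sample numbers are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare conversion rates of variants A and B with a pooled two-proportion z-test.

    Returns (rate_a, rate_b, z, two_sided_p_value).
    """
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("Both variants convert at 0% or 100%; the test is undefined.")
    z = (rate_b - rate_a) / se
    # Standard normal CDF via the error function: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return rate_a, rate_b, z, p_value
```

For example, `two_proportion_z_test(200, 1000, 250, 1000)` (a 20% vs. 25% conversion rate) gives a z-score of roughly 2.7 and a two-sided p-value below 0.01. Decide on your sample size before the test starts; checking repeatedly and stopping as soon as the p-value dips below 0.05 inflates false positives.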

Card Sorting

Here, users sort items into groups that make sense to them and label those groups.

Card sorting helps you understand mental models, which improves navigation design.

Imagine testing a settings page for a SaaS platform. Users might group "Notifications" under "Account Preferences" instead of "Alerts." Their choices guide your design structure to match their expectations.
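A common way to analyze open card sorts is a co-occurrence (similarity) matrix: for every pair of cards, the share of participants who placed them in the same group. Pairs with high shares likely belong together in your navigation. This minimal Python sketch assumes each participant’s result is a mapping from group label to cards; the function name, cards, and groupings are hypothetical, loosely based on the settings-page example.

```python
from collections import Counter
from itertools import combinations


def co_occurrence_share(sorts: list[dict[str, list[str]]]) -> dict[tuple[str, str], float]:
    """For each pair of cards, return the share of participants who grouped them together.

    Each element of `sorts` is one participant's result: group label -> cards in that group.
    """
    counts: Counter = Counter()
    for groups in sorts:
        for cards in groups.values():
            # Sort so ("A", "B") and ("B", "A") count as the same pair
            for pair in combinations(sorted(set(cards)), 2):
                counts[pair] += 1
    return {pair: n / len(sorts) for pair, n in counts.items()}


# Hypothetical results from three participants
sorts = [
    {"Account Preferences": ["Notifications", "Language", "Password"], "Alerts": ["Security alerts"]},
    {"Account Preferences": ["Notifications", "Language"], "Security": ["Password", "Security alerts"]},
    {"Settings": ["Language"], "Alerts": ["Notifications", "Security alerts"], "Account": ["Password"]},
]
shares = co_occurrence_share(sorts)
```

Here, two of the three hypothetical participants grouped "Language" with "Notifications", a hint that those settings belong on the same page.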

Tree Testing

Tree testing strips out visuals to test navigation clarity.

You give users a scenario (for example, find the pricing details), and they navigate a plain-text hierarchy to complete it.

This method helps you validate that your site’s structure supports intuitive user paths before committing to a design.
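Tree-testing results are usually summarized per task as a success rate (did participants end at the right node?) and a directness measure (did they get there without backtracking?). The sketch below computes both for one task. It assumes each attempt is recorded as the ordered list of nodes clicked and treats any revisited node as backtracking, which is a simplification; the class and function names and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    path: list[str]  # nodes the participant clicked, in order, starting at "Home"


def tree_test_summary(attempts: list[Attempt], correct_node: str) -> dict[str, float]:
    """Success rate and direct-success rate for one tree-testing task.

    Simplification (assumed): an attempt is "direct" if the participant never
    revisited a node, i.e. never went back up the tree.
    """
    if not attempts:
        raise ValueError("No attempts to summarize.")
    successes = [a for a in attempts if a.path and a.path[-1] == correct_node]
    direct = [a for a in successes if len(a.path) == len(set(a.path))]
    return {
        "success_rate": len(successes) / len(attempts),
        "direct_success_rate": len(direct) / len(attempts),
    }


# Hypothetical attempts at "Find the pricing details"
attempts = [
    Attempt(["Home", "Products", "Pricing"]),
    Attempt(["Home", "Support", "Home", "Products", "Pricing"]),  # backtracked
    Attempt(["Home", "Resources", "Blog"]),                       # wrong destination
    Attempt(["Home", "Products", "Pricing"]),
]
summary = tree_test_summary(attempts, correct_node="Pricing")
```

The gap between the two rates (75% vs. 50% here) shows how many successful participants had to backtrack before finding the right path.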

Surveys and Questionnaires

These gather user sentiment and preferences on a broad scale. For example, after releasing a new feature, you can survey users about ease of use and satisfaction.

Surveys are great for spotting trends but rely on users accurately reporting their experiences. Pair them with behavioral methods for balanced insights.
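For a Likert-style question (for example, "The new feature was easy to use," rated 1 to 5), two common summaries are the mean score and the top-2-box share: the percentage of respondents who picked one of the two most positive options. This Python sketch computes both; the function name and the responses are hypothetical.

```python
from statistics import mean


def summarize_likert(responses: list[int], scale_max: int = 5) -> dict:
    """Summarize answers to one Likert item (1 = strongly disagree ... scale_max = strongly agree)."""
    if not responses:
        raise ValueError("No responses.")
    if any(r < 1 or r > scale_max for r in responses):
        raise ValueError(f"Responses must be between 1 and {scale_max}.")
    top_two = sum(1 for r in responses if r >= scale_max - 1)
    return {
        "n": len(responses),
        "mean": mean(responses),
        # Top-2-box: share of respondents choosing one of the two most positive options
        "top_2_box": top_two / len(responses),
    }


ease_of_use = summarize_likert([5, 4, 3, 5, 2])  # hypothetical answers
```

Reporting the top-2-box share next to the mean helps because an average can hide a polarized split between delighted and frustrated users.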

Eye-tracking Studies

This high-tech method maps where users look, showing what they notice first and what they miss entirely.

Is your landing page not converting? Eye-tracking can reveal whether secondary elements like images distract users from the primary CTA.

These studies are particularly useful for refining visual hierarchy.
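Eye-tracking tools typically export fixations (where the eye paused and for how long), which you can summarize by area of interest (AOI), such as the hero image or the primary CTA. This minimal Python sketch computes each AOI’s share of total fixation time. The AOI coordinates, fixation data, and function name are hypothetical, and real export formats vary by tool.

```python
Box = tuple[float, float, float, float]  # (x0, y0, x1, y1) in screen pixels


def aoi_dwell_share(fixations: list[tuple[float, float, float]], aois: dict[str, Box]) -> dict[str, float]:
    """Share of total fixation time spent in each area of interest (AOI).

    `fixations` holds (x, y, duration_ms). Fixations outside every AOI still count
    toward the total, so the shares can sum to less than 1.
    """
    total = sum(duration for _, _, duration in fixations)
    if total == 0:
        raise ValueError("No fixation time recorded.")
    dwell = {name: 0.0 for name in aois}
    for x, y, duration in fixations:
        for name, (x0, y0, x1, y1) in aois.items():
            if x0 <= x <= x1 and y0 <= y <= y1:
                dwell[name] += duration
                break  # assign each fixation to the first matching AOI only
    return {name: t / total for name, t in dwell.items()}


aois = {"hero_image": (0, 0, 800, 400), "primary_cta": (300, 450, 500, 510)}
fixations = [(100, 100, 300), (400, 480, 200), (600, 200, 250), (50, 700, 250)]
dwell_shares = aoi_dwell_share(fixations, aois)
```

In this made-up data, the hero image holds 55% of viewing time versus 20% for the CTA, the kind of pattern that would support simplifying the imagery around your call to action.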

Diary Studies

Users document their interactions with your product, capturing moments you can’t observe live.

For example, say you’re launching a new developer tool. A diary study could reveal friction points that emerge during extended use, such as integration or feature discovery challenges.

Diary studies are great for uncovering long-term user needs.

Click Testing

This simple method lets you test where users would click on a static interface. It helps validate design assumptions, such as whether users recognize a button as clickable.

You could use it to test if your "Start Free Trial" button looks actionable on a new landing page.
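The usual headline metric for a click test is the first-click success rate: the share of participants whose first click lands on the intended target. Here’s a minimal Python sketch, assuming you have each participant’s first click as screen coordinates and the target’s bounding box; the function name and numbers are hypothetical.

```python
def first_click_success_rate(
    clicks: list[tuple[float, float]], target: tuple[float, float, float, float]
) -> float:
    """Share of participants whose first click landed inside the target's bounding box.

    `clicks` holds each participant's first click as (x, y); `target` is (x0, y0, x1, y1).
    """
    if not clicks:
        raise ValueError("No clicks recorded.")
    x0, y0, x1, y1 = target
    hits = sum(1 for x, y in clicks if x0 <= x <= x1 and y0 <= y <= y1)
    return hits / len(clicks)


start_trial_button = (300, 450, 500, 510)  # hypothetical "Start Free Trial" bounds
first_clicks = [(350, 470), (600, 480), (499, 509), (10, 10)]
rate = first_click_success_rate(first_clicks, start_trial_button)
```

With these made-up clicks, half the participants hit the button on their first try.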

How to choose the right UX testing method

As you’ve seen, you can choose from several UX testing methods, each with its own strengths and trade-offs. The ones you select should align with your research goals, your users’ needs, and, of course, your resources.

Here's a deeper dive to help you make smart choices:

1. Start with the problem you're solving

Every testing method answers different questions.

Some focus on usability — how easily users complete tasks — while others uncover motivations, preferences, or mental models.

Knowing the type of insight you need helps you avoid wasted effort and make your testing purposeful.

If users drop off on a mobile app, use session recording or usability testing to pinpoint the issue. For new feature ideas, exploratory research like contextual inquiry helps you understand user needs in real-world settings.

2. Match methods to data types

Decide whether you need qualitative insights (user emotions, behaviors, etc.) or quantitative data (task completion rates).

For example, remote and in-person usability tests can give you qualitative data on user behavior, while surveys give you satisfaction scores, which are quantitative.

3. Adapt to your product’s complexity

A simple landing page test might only need A/B testing.

In contrast, moderated usability testing would be more beneficial for intricate workflows, such as a multi-step API integration tool. It allows you to observe and ask follow-up questions.

4. Weigh user accessibility and location

Remote testing is your go-to if users are scattered across the globe. Unmoderated remote usability testing or online tree testing lets you gather insights without booking a single flight.

Conversely, in-person methods shine when your product needs hands-on interaction. You just can’t test a point-of-sale system over Zoom.

5. Prioritize based on risk areas

High-stakes features need deeper testing.

For instance, usability testing and heuristic evaluation can uncover critical pain points when launching a payment system.

For less vital changes, lightweight methods such as click testing may be enough.

6. Consider scalability

Some methods (A/B testing, in particular) work well for live products with many users. Others (diary studies, for instance) are better suited for niche or long-term insights, requiring fewer participants.

When choosing a method, consider whether you’ll need to keep adding participants to your tests over time.

How to use AI for UX testing methods

Whether you opt for a single method or plan a study with multiple UX testing methods, the process can be complex. From planning and data collection to analysis and final decision-making, it all takes time and effort.

AI can cover much of this process and support the different stages of the workflow, without replacing researchers.

Below, we’ll look at how AI helps you move faster, spot patterns earlier, and reduce manual effort. All while keeping you in full control of interpretation, validation, and final decisions.

Most AI tools that facilitate UX testing typically focus on specific parts of the process. Ideally, you’ll want to pick one that can cover UX testing at multiple stages, such as HeyMarvin. Our AI-native customer insights platform will spare you from switching between tools, losing context, or duplicating effort. Instead, it will connect your UX testing data from multiple sources and help you automate the initial analysis.

Create a free HeyMarvin account today and see how easy it is to get your first insights from UX testing in hours instead of days. Free up your team to spend their valuable time on reviewing and validating the AI results instead of manually analyzing data line by line.

Challenges in UX testing

UX testing is powerful, but it comes with obstacles that can slow you down or skew your results.

Here’s what to watch for and how to address it to plan smarter and get better insights:

- Finding the right participants: Recruiting users who match your target audience can be tough. If your product is niche (a developer tool, for instance), it’s even harder. Try tapping into professional communities or using targeted recruitment services.

- Avoiding bias in feedback: Users often say what they think you want to hear. Neutral prompts, like "Tell me about your thought process here," encourage honesty and reduce bias.

- Dealing with time constraints: UX testing takes time, from planning to analysis. Deadlines can pressure you to skip steps. Streamline your process by focusing on key user flows instead of testing everything at once.

- Interpreting qualitative data: User feedback can be messy and subjective. You might hear conflicting opinions about the same feature. To prioritize what matters most, categorize findings by frequency and severity.

- Remote testing technical limitations: Remote setups can fail due to bad internet or user unfamiliarity with testing tools. Support your sessions with clear instructions and have a backup plan, like phone interviews, if tech fails you.

- Balancing team expectations: Stakeholders may push for quick wins if they don’t fully understand testing outcomes. Present findings with clear visuals and UX research reports to highlight issues and their impact.

- Ensuring usability across devices: Users switch between desktops, tablets, and phones. Testing on multiple devices and screen sizes adds complexity but helps you cover the scenarios your users actually encounter.
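To put the "categorize by frequency and severity" advice into practice, one simple heuristic is to multiply the share of participants who hit an issue by a severity rating and rank by the result. The Python sketch below assumes a 1-to-4 severity scale and uses hypothetical findings from the checkout example; the scale, class, and function names are illustrative, not a standard.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    issue: str
    participants_affected: int
    severity: int  # assumed scale: 1 = cosmetic ... 4 = blocks task completion


def prioritize(findings: list[Finding], total_participants: int) -> list[tuple[str, float]]:
    """Rank findings by frequency (share of participants affected) times severity."""
    scored = [
        (f.issue, round(f.participants_affected / total_participants * f.severity, 3))
        for f in findings
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


findings = [
    Finding("Can't find where to apply a discount code", 5, 3),
    Finding("Payment page feels cluttered", 3, 2),
    Finding("Pay button hidden below the fold on mobile", 2, 4),
]
ranking = prioritize(findings, total_participants=8)
```

Treat the score as a starting point for team discussion rather than a final verdict; a rare issue that blocks payment may still deserve attention first.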

Frequently asked questions (FAQs)

Ready to dive into UX testing? Check out these FAQs first:

How often should UX testing be conducted?

Conduct UX testing regularly throughout your design process. You want to test early to validate concepts and later to refine features.

After launch, employ different UX design testing methods with every major update or whenever user needs change.

How do you measure the ROI of UX testing?

Measure ROI by tracking improvements in key metrics — conversion, task success, and user satisfaction. Compare these before and after testing to see the impact.
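The before-and-after comparison can be as simple as computing the relative change in each metric between two snapshots. This Python sketch does that; the function name, metric names, and values are hypothetical.

```python
def relative_change(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Relative change (after - before) / before for every metric present in both snapshots."""
    return {
        metric: (after[metric] - before[metric]) / before[metric]
        for metric in before
        if metric in after and before[metric] != 0  # skip metrics we can't divide by
    }


# Hypothetical snapshots taken before and after shipping changes informed by testing
before = {"conversion_rate": 0.025, "task_success_rate": 0.70, "csat": 3.9}
after = {"conversion_rate": 0.030, "task_success_rate": 0.84, "csat": 4.2}
changes = relative_change(before, after)
```

Other factors (seasonality, marketing campaigns, pricing changes) also move these metrics, so when possible, confirm the impact of a specific change with an A/B test.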

What skills are needed to conduct UX testing?

Conducting UX testing requires a mix of soft skills and technical know-how:

- Observation skills: Notice user behaviors, frustrations, and subtle cues (hesitation or confusion).

- Communication skills: Ask unbiased questions, interpret feedback, and keep users comfortable during sessions.

- Analytical skills: Spot patterns in data, prioritize findings, and turn insights into actionable steps.

- Technical proficiency: Know how to run tests smoothly and effectively using testing tools and methods.

What is the difference between qualitative and quantitative UX testing methodologies?

The main differences between qualitative and quantitative UX testing methodologies come down to goals.

Qualitative methods are flexible and exploratory, helping you understand why users behave the way they do. In contrast, quantitative methods are structured and standardized, helping you measure what happens and how often at scale.

What is the most commonly used UX testing methodology?

Usability testing is by far the most common UX testing methodology. Useful across different research goals, it is practical and versatile because it allows you to:

- See exactly where users struggle

- Ask them why they struggle

- Observe how many of them struggle and how often

Qualitative usability testing typically uses moderated sessions to understand behavior and reasoning, while quantitative usability testing typically uses unmoderated sessions to measure success rates or time-on-task.
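For quantitative usability tests, two metrics come up constantly: task success rate and time-on-task. The Python sketch below reports a success rate with an adjusted-Wald (Agresti-Coull) confidence interval, commonly recommended for the small samples typical of usability studies, and summarizes task times with a geometric mean, since a few slow participants tend to skew task times upward. The function name and data are hypothetical.

```python
from math import sqrt
from statistics import geometric_mean


def success_rate_ci(successes: int, n: int, z: float = 1.96) -> tuple[float, float, float]:
    """Observed task success rate plus an adjusted-Wald (Agresti-Coull) ~95% interval.

    The adjustment behaves better than the plain Wald interval at small sample sizes.
    """
    n_adj = n + z**2
    p_adj = (successes + z**2 / 2) / n_adj
    margin = z * sqrt(p_adj * (1 - p_adj) / n_adj)
    return successes / n, max(0.0, p_adj - margin), min(1.0, p_adj + margin)


# Hypothetical results from one unmoderated task with 10 participants
observed, low, high = success_rate_ci(successes=8, n=10)
# Geometric mean of task times in seconds, less sensitive to a few very slow sessions
typical_time_s = geometric_mean([20, 80, 35, 42, 51, 60, 38, 29])
```

With 8 of 10 hypothetical participants succeeding, the observed rate is 80%, but the interval runs from roughly 48% to 95%, a useful reminder of how much uncertainty small samples carry.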

Conclusion

The UX testing methodologies discussed above are the most reliable way to understand:

- How users truly experience your product

- What you can improve

It’s not about perfection as much as it is about progress. Every new insight sharpens your design and reduces risks, bringing you closer to creating something users love.

The real magic happens after testing, though. And that’s when HeyMarvin steps in.

Our AI-powered research assistant helps you uncover patterns, trends, and insights hidden in your user testing data. HeyMarvin turns raw feedback into impactful decisions by streamlining the qualitative data analysis and centralizing everything.

Create your free account today and see what it’s like to take the guesswork out of UX testing with automated analysis.

See Marvin AI in action

Want to spend less time on logistics and more on strategy? Book a free, personalized demo now!
