How to Collect Customer Feedback: Methods & Best Practices

A step-by-step guide to collecting customer feedback, covering the best methods, tools, common mistakes, and practices for actionable insights.

While there are plenty of ways to collect customer feedback, the real value lies in the method you choose. You need ways to capture context and surface problems before they turn into churn.

We’ve heard it firsthand from one of our customers, a SaaS company. During their beta stage, they added a survey snippet that asked users for feedback to help refine the product. It worked: the responses surfaced UX issues the team could not have spotted otherwise.

Selecting the right methods requires a clear understanding of what you want to learn. Let’s look at how to collect customer feedback in ways that give teams more than a score to stare at.

TL;DR - How to collect customer feedback

- Surveys: For measuring satisfaction, tracking trends, and comparing responses over time

- Public reviews: For spotting recurring complaints, expectation gaps, and reputation issues

- In-product and on-page micro-feedback: For collecting reactions tied to a specific screen, action, or feature

- Support tickets and chatbots: For capturing pain points, urgent issues, and service-related feedback

- Social media monitoring: For picking up unsolicited feedback, sentiment shifts, and fast-moving complaints

- Brand communities: For tracking recurring questions, product issues, and feature demand from engaged users

- Interviews and usability testing: For context, deeper explanation, or direct observation of friction

What is customer feedback and why most teams get it wrong

Customer feedback is first-hand evidence about how people experience your product or service. It includes what customers say in surveys, interviews, reviews, and support conversations, and what they do in usability tests and product interactions.

Your teams may get it wrong when they:

- Gather customer feedback without a research goal.

- Mix together feedback sources such as support complaints, feature requests, NPS responses, and interview quotes without structuring them.

- Ask weak or leading questions and get vague answers in return.

- Collect feedback across tools without tagging or centralizing it.

- Mistake volume for insight. More comments do not mean more clarity.

- Fail to consider the feedback while making decisions.

The problem is not volume, because most teams already collect enough feedback. It’s that they collect it without a system in place.

Top methods for collecting customer feedback

There is no single best way to collect customer feedback. Some methods help you measure patterns across a large group, and others help you understand why customers feel a certain way or where they run into friction. That is why method selection matters.

Let’s look at each method in detail:

Surveys

Use surveys when you need structured answers that you can compare. They help teams measure satisfaction, track changes over time, and compare responses across segments.

Keep them short. Tie the questions to a specific moment, such as after onboarding, after a support interaction, or after a purchase. Surveys sent at these moments work because the experience is still fresh.

McDonald’s customer satisfaction survey is a good example. It uses receipt-based post-purchase surveys through McDVoice. Here, customers enter a survey code from their receipt and rate their recent store experience.

Public reviews

Public reviews are widely visible, so they help build trust with prospects who match your ideal customer profile (ICP). They are not great for nuanced diagnosis, but they are excellent for spotting recurring complaints and expectation gaps.

The data can also help teams compare perceived strengths and weaknesses across competitors.

For example, Airbnb uses post-stay reviews to collect structured customer feedback on specific aspects of the experience.

In-product and on-page micro-feedback

This is the fast lane. Thumbs up, thumbs down, a one-question prompt, a “Was this helpful?” widget. These methods work when you want to tie feedback to a specific screen, answer, or action.

OpenAI uses this pattern directly in ChatGPT and related experiences, where users can tap thumbs down to report a bad response or use thumbs up/down to shape what they see.

Support tickets and chatbots

Support tickets and chatbot conversations are useful customer feedback sources because they capture pain points, failure patterns, documentation gaps, and service quality issues. Unlike surveys, this feedback connects a problem with real urgency.

IKEA is a solid real-world example. It collects feedback at the end of chatbot conversations by asking users to rate their chat experience and share written comments before submitting the survey.

Social media monitoring

Social media is where you pick up unsolicited feedback that customers would never put into a survey. It is useful for sentiment, recurring complaints, campaign response, and fast-moving issues.

Brands like Amazon collect customer feedback through social media by monitoring posts and replying publicly to complaints. Then they move the conversation to direct messages for account-specific help.

Brand communities

Brand communities give companies ongoing feedback from highly engaged customers. This is different from social media listening or public reviews because the feedback comes from loyal followers: you can see what they ask for, what they debate, and which ideas gain traction over time.

Apple gathers such customer feedback through its Support Community. Here, users ask questions, report product issues, and discuss recurring problems in public threads that other customers and Apple specialists can review.

How to choose the best way to collect customer feedback

Choosing the right method depends on your needs. A survey, interview, review, or support log can all be useful, but not for the same job.

Use these steps to choose the right one:

- Define the decision first: Are you measuring satisfaction, diagnosing friction, testing a new concept, or looking for new feature requests? If you need to measure sentiment across a broad group, use structured methods like CSAT, CES, or NPS. If you need to understand why something is happening, use interviews or open-text feedback instead.

- Match the method to your audience: Think about who is responding and what format fits them best. For example, active users may respond to an in-product prompt. B2B buyers or enterprise customers may give better feedback through email surveys or scheduled interviews.

- Collect feedback at the right touchpoint: Pick an appropriate time to ask for feedback. Post-support surveys work best after a resolution. In-product prompts work during use. Exit surveys work near abandonment. Timing affects response quality more than most teams expect.

- Decide what kind of data you need: Use quantitative methods when you need pattern tracking, trend lines, or comparison across segments. Use qualitative analysis when you need an explanation, context, or language that customers use to describe the problem.
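
For the structured methods named in the first step, the scores themselves are simple arithmetic. The sketch below shows the widely used NPS calculation (percentage of promoters scoring 9-10 minus percentage of detractors scoring 0-6) and one common CSAT convention. The function names and the CSAT threshold of 4 on a 1-5 scale are assumptions for illustration, not something prescribed by this guide.

```python
from typing import Iterable


def nps(scores: Iterable[int]) -> float:
    """Net Promoter Score from 0-10 answers: % promoters minus % detractors."""
    scores = list(scores)
    if not scores:
        raise ValueError("need at least one score")
    promoters = sum(1 for s in scores if s >= 9)   # 9 or 10
    detractors = sum(1 for s in scores if s <= 6)  # 0 through 6
    return 100.0 * (promoters - detractors) / len(scores)


def csat(ratings: Iterable[int], satisfied_from: int = 4) -> float:
    """Percent of 1-5 ratings at or above `satisfied_from` (assumed convention)."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("need at least one rating")
    satisfied = sum(1 for r in ratings if r >= satisfied_from)
    return 100.0 * satisfied / len(ratings)


print(nps([10, 9, 8, 7, 6, 3, 10, 9, 5, 10]))  # 20.0
print(csat([5, 4, 3, 2, 5]))                    # 60.0
```

A score like this tells you what is happening across a group. It does not explain why, which is where interviews and open-text feedback come in.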

Where HeyMarvin fits in the customer feedback process

Once you collect customer feedback across all methods, you’ll find that the real friction lies in turning it into useful insights.

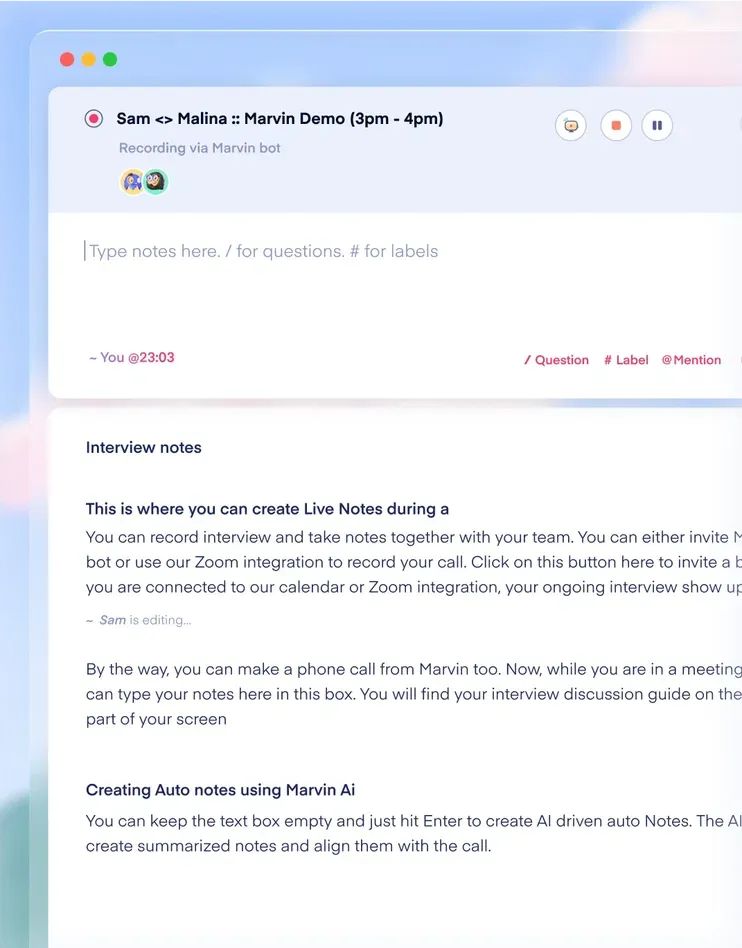

HeyMarvin is a research repository and analysis platform built for that stage. It helps teams work with feedback after it starts piling up. You bring interviews, surveys, support conversations, and other research inputs into one place. From there, your teams can transcribe, tag, cluster themes, search across projects, and track insights without needing five other tools.

The platform is useful when your team needs to:

- Centralize feedback across methods

- Use automated tagging and notes

- Find patterns across projects

- Make insights easier to revisit and share

Pantheon has experienced it firsthand. Before HeyMarvin, its two-person research team worked across Google Sheets, Miro, Zoom, Drive, and Slides to analyze customer calls and report patterns. With HeyMarvin, the workflow got faster and easier to reuse.

As Cathi Bosco, Senior UX Researcher, put it, “We needed a tool like HeyMarvin so that research was more self-serve and discoverable across the organization. And it really freed us up as a powerhouse of two to be more strategic.”

If your team wants to see how that workflow looks in practice, set up a free demo with us today.

Best practices for customer feedback collection

Good feedback fails in small, boring ways.

Usually the cause is not a lack of effort. It is bad question design, biased sampling, weak tagging, and notes that you cannot trace back to the original source.

You can avoid these failures with this checklist:

- Ask one question at a time: Do not ask double-barreled or leading questions. For example, “How easy and useful was the onboarding?” is two questions pretending to be one. Questions like this distort responses and make the data harder to interpret.

It’s also important to use plain wording. If customers have to decode the question, the quality of the answer drops.

- Tag feedback with enough context: A comment without metadata is just a comment. Add product area, journey stage, segment, issue type, and date. Then, if a team wants to understand why trial users are dropping off during onboarding, they can filter for that feedback directly, without digging through raw notes again.

- Centralize all feedback: If customer feedback is spread across multiple locations, it can take time to identify patterns. Centralizing feedback makes it easier to compare themes, revisit fringe requests, and share findings across teams.

And that’s also where a research repository like HeyMarvin can help by keeping tagging, search, and synthesis in one place.

- Set expectations with customers: Tell customers how you’ll use the feedback. Be clear that not every request will lead to a product change. That small bit of honesty saves a lot of frustration later and makes follow-up easier.

- Automate feedback collection when timing matters: Automate feedback requests after key moments like support resolution, onboarding, or churn. This keeps collection consistent with less manual follow-up and helps teams capture fresher, more comparable input.

- Review feedback regularly: You shouldn’t review it only when something goes wrong. Regular analysis will help your teams spot patterns early, track whether fixes are working, and tell one-off complaints and repeat issues apart.
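
To make the tagging advice in this checklist concrete, here is a minimal sketch of a tagged feedback record and a filter over it. The `FeedbackItem` class, its field names, and the `filter_feedback` helper are hypothetical, chosen to mirror the metadata listed above (product area, journey stage, segment, issue type, and date). A real repository would have its own schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FeedbackItem:
    """One piece of feedback plus the context needed to find it again."""
    text: str
    source: str          # e.g. "survey", "support_ticket", "interview"
    product_area: str
    journey_stage: str
    segment: str
    issue_type: str
    received_on: date


def filter_feedback(items, **criteria):
    """Return the items whose fields equal every given criterion."""
    return [
        item for item in items
        if all(getattr(item, name) == value for name, value in criteria.items())
    ]


items = [
    FeedbackItem("Could not find the import button", "survey", "data import",
                 "onboarding", "trial", "usability", date(2024, 3, 4)),
    FeedbackItem("Invoice PDF is missing our tax ID", "support_ticket", "billing",
                 "renewal", "enterprise", "bug", date(2024, 3, 6)),
    FeedbackItem("Setup wizard felt too long", "interview", "setup",
                 "onboarding", "paid", "usability", date(2024, 3, 9)),
]

# Why are trial users dropping off during onboarding?
trial_onboarding = filter_feedback(items, segment="trial", journey_stage="onboarding")
for item in trial_onboarding:
    print(item.received_on, item.source, item.text)
```

Keeping the `source` and date on every record is what lets a finding be traced back to the original comment later.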

Frequently asked questions (FAQs)

Here are a few common questions you might have during your customer feedback collection.

What is the difference between customer feedback and customer insights?

Customer feedback is the raw input customers give you. Examples are survey responses, reviews, customer interview quotes, and in-product comments. Customer insights are what you get after analyzing that input, connecting the dots, and translating it into something a team can act on.

Put simply, feedback is the signal. Insight is the interpretation that explains what matters and why.

What is the right cadence for collecting customer feedback?

There is no universal cadence. You can run customer feedback on two tracks: collect continuously at key touchpoints, then review it on a regular schedule.

What sample size is needed for customer feedback research?

It depends on the method. For surveys, the sample size depends on your audience size and the level of confidence you need in the result.

For qualitative methods, the range is usually smaller. For example:

- In-depth interviews can have 5-30 participants, with around 12 enough for most studies

- Focus group studies usually run 4-6 groups with 5-10 participants per group

- Usability testing can have 5-8 users per round

But sample size is not one-size-fits-all. It changes based on how precise you need the result to be and how much variation exists in the responses.
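
For surveys, one common way to turn audience size, confidence, and precision into a number is Cochran's formula with a finite population correction. The sketch below is a rough estimate under stated assumptions, not a rule from this guide: the function name and defaults (5% margin of error, 95% confidence, and a proportion of 0.5 as the most conservative guess about variation) are illustrative, and it assumes simple random sampling.

```python
import math
from statistics import NormalDist


def survey_sample_size(population: int,
                       margin_of_error: float = 0.05,
                       confidence: float = 0.95,
                       proportion: float = 0.5) -> int:
    """Estimate completed responses needed for a survey of `population` people."""
    if population <= 0:
        raise ValueError("population must be positive")
    # z-score for a two-sided interval at the requested confidence level
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # Cochran's formula for a very large population
    n0 = (z ** 2) * proportion * (1 - proportion) / (margin_of_error ** 2)
    # Finite population correction shrinks the estimate for smaller audiences
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


print(survey_sample_size(10_000))                        # 370
print(survey_sample_size(10_000, margin_of_error=0.03))  # tighter margin needs more responses
```

The result is the number of completed responses, so the number of invitations has to be higher to allow for non-response.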

Conclusion

Good customer feedback depends on choosing the right way to collect it. Surveys help measure patterns, whereas interviews and usability tests add context. And methods like reviews, support conversations, social media, and brand communities help surface the recurring issues that structured questions often miss.

Once that feedback starts coming in from multiple places, the next challenge is keeping it usable. Teams need to organize it, preserve the original context, and make findings easier to revisit and share. HeyMarvin supports that part of the process by:

- Surfacing cited answers from a shared repository

- Sending insights out through digests and topic subscriptions

- Packaging all the findings into reports, video clips, playlists, or AI-generated presentations

- Supporting AI-moderated interviews in 40+ languages

If your team already has enough feedback but not enough clarity, book a demo to see how HeyMarvin fits your workflow.
