AI Customer Feedback: How It Works & How to Use It

Explore how AI enhances customer feedback analysis, from tool selection to overcoming key challenges.

Surveys, support tickets, interviews, app reviews, and dashboards are filled with customer feedback.

But collecting data isn’t the same as understanding it. When insights are spread between tools and datasets, teams struggle to see the patterns that should guide product decisions.

In this article, we’ll show you how to use AI to make the most of customer feedback. There’s a method to answering more questions with greater confidence and stronger evidence.

Curious how that works in real life? Book a demo with HeyMarvin and see how an AI-native customer insights platform can help you upgrade your decision-making.

What is AI customer feedback?

AI customer feedback is an approach that uses machine learning and natural language processing to read, sort, and structure what users say.

Your customers can share feedback through any channel. But with the use of AI, you no longer have to manually sort through hundreds or thousands of comments. Instead, you review the analysis to get to insights faster.

While AI customer feedback software won’t “understand” text like a human researcher, it will show you the patterns. It’s very good at scale and speed, handing you a map and helping you decide how to act.

How does AI analyze customer feedback?

When it comes to customer feedback, AI combines text parsing, intent detection, theme/sentiment analysis, and clustering:

- First, the AI breaks the text into tokens: It detects phrases, sentences, individual words, and their meaning. And it can recognize entities such as feature names, buttons, screens, product versions, competitor names, etc.

- “The onboarding is confusing, and the buttons feel hidden,” should be broken down into: onboarding, confusing, buttons, hidden.

- Then, it starts to detect intent: It identifies what the user wants to say. Are they reporting a bug, asking for a feature, expressing frustration, or comparing products?

- “Can’t find the export option” should be signaled as a discoverability issue in the workflow.

- Next, it runs sentiment and emotion analysis: It could mark feedback as frustrated, confused, delighted, skeptical, or angry, and quantify it in percentages.

- “40% of the users felt overwhelmed while using this feature” should give you a design signal.

- Finally, it clusters themes: The AI spots the patterns behind different words that imply the same things and groups similar feedback.

- “Hard to navigate,” “too many clicks,” “can’t find the settings,” and “feels messy” should fall under a single theme: navigation friction.

- Over time, it also detects trends: AI can analyze large datasets and identify correlations within them. By comparing feedback from months ago with the latest responses, it can spot how themes rise or fall.

- “Previous feedback showed 31% onboarding issues before the redesign and 12% afterward,” confirms real improvements.
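To make these steps concrete, here’s a minimal Python sketch. It is not how any particular AI tool works internally: the keyword lexicon and stopword list are hypothetical stand-ins for the learned models a real product would use, and the function names are our own. It only illustrates the flow of tokenizing a comment, grouping different wordings under one theme, and measuring how often a theme appears.

```python
import re

# Hypothetical keyword lexicon; a real tool would learn themes with a
# clustering or language model rather than a hand-written word list.
THEME_KEYWORDS = {
    "navigation friction": {"navigate", "clicks", "find", "messy"},
    "onboarding": {"onboarding"},
}

# Tiny illustrative stopword list, not a complete one.
STOPWORDS = {"the", "is", "and", "feel", "to", "too", "many"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def content_words(text: str) -> list[str]:
    """Drop stopwords so only the informative tokens remain."""
    return [t for t in tokenize(text) if t not in STOPWORDS]


def assign_themes(text: str) -> set[str]:
    """Return every theme whose keywords appear in the comment."""
    tokens = set(tokenize(text))
    return {theme for theme, words in THEME_KEYWORDS.items() if tokens & words}


def theme_share(comments: list[str], theme: str) -> float:
    """Percentage of comments that mention the given theme."""
    if not comments:
        return 0.0
    hits = sum(theme in assign_themes(c) for c in comments)
    return 100 * hits / len(comments)


print(content_words("The onboarding is confusing, and the buttons feel hidden"))
# ['onboarding', 'confusing', 'buttons', 'hidden']

for phrase in ["Hard to navigate", "too many clicks",
               "can't find the settings", "feels messy"]:
    print(phrase, "->", assign_themes(phrase))
```

Calling `theme_share` on feedback collected before and after a redesign gives the kind of before/after comparison described in the trend step.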

Where AI adds most value while analyzing customer feedback

AI is most valuable when analyzing feedback that is too large, messy, or fragmented for manual analysis.

Consider using it whenever:

- Volume explodes (thousands of comments within days or weeks)

- Feedback is unstructured (mixed issues in one paragraph; long, emotional rants)

- Speed will impact outcomes (onboarding confusion spikes)

- Feedback is siloed (tickets from Support, interviews from Product, surveys from Marketing, etc.)

- You need to see even the weakest signals fast (before churn spikes)

- You have to back up decisions with evidence (“18% of negative feedback in the last 30 days mentions export failure on mobile.”)

Take this piece of feedback for instance: “Love the idea, but exporting is broken on mobile, and I don’t get why I need to sign in twice.”

This single comment contains three distinct signals: praise for the concept, a mobile bug, and a UX issue in the authentication flow.

Imagine receiving hundreds or thousands of such comments. AI-powered customer feedback analysis gives you far better visibility into that data. It breaks each comment down into its individual signals and extracts patterns backed by counts and user quotes.
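An evidence statement like the “18% of negative feedback in the last 30 days” example boils down to a filtered count. Here’s a rough sketch of that arithmetic in Python. It assumes each comment already carries a sentiment label and a received date from an upstream step, and the `Comment` class and `negative_mention_share` function are hypothetical names for illustration. The plain substring match is a simplification of real topic detection.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Comment:
    text: str
    sentiment: str  # assumed labelled upstream: "positive", "neutral", "negative"
    received: date


def negative_mention_share(comments: list[Comment], keyword: str,
                           today: date, window_days: int = 30) -> float:
    """Percentage of recent negative comments whose text contains the keyword."""
    cutoff = today - timedelta(days=window_days)
    recent_negative = [
        c for c in comments
        if c.sentiment == "negative" and c.received >= cutoff
    ]
    if not recent_negative:
        return 0.0
    hits = sum(keyword.lower() in c.text.lower() for c in recent_negative)
    return 100 * hits / len(recent_negative)


sample = [
    Comment("Export fails on mobile", "negative", date(2024, 6, 20)),
    Comment("Login is slow", "negative", date(2024, 6, 25)),
    Comment("Export fails again", "negative", date(2024, 4, 1)),  # outside window
    Comment("Love the export feature", "positive", date(2024, 6, 28)),
]
print(negative_mention_share(sample, "export", today=date(2024, 6, 30)))  # 50.0
```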

How to implement AI analysis for customer feedback

AI is a layer you can add to survey tools, support systems, research repositories, analytics, etc. That’s why there’s no single way to implement it in customer feedback analysis. But there are two directions you can choose from, depending on how many resources you want to invest.

Option 1: Build your own AI stack

This will suit you if you need highly custom workflows and want full infrastructure control. It requires significant ongoing work and dev resources. But if you’re willing to juggle multiple tools, you can assemble your stack piece by piece:

- Use an LLM API to analyze open text

- Add a sentiment model

- Build clustering logic

- Store results in your database

- Visualize themes in a dashboard

You’ll be running the entire AI system, making sure it all fits and works smoothly together. For some teams, this level of ownership makes sense, whereas for many, it becomes a second product to maintain.
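To give a sense of what “assembling the stack” involves, here’s a small Python skeleton. It’s a sketch under heavy assumptions: the two `stub_` functions are placeholders where an LLM API call, a sentiment model, and clustering logic would go, and the table layout is invented for illustration. Only the storage and the theme-count query (the kind a dashboard would chart) are real, using Python’s built-in `sqlite3` module.

```python
import sqlite3
from typing import Callable


def stub_extract_theme(text: str) -> str:
    """Placeholder for an LLM call plus clustering; a keyword check is used here."""
    return "export" if "export" in text.lower() else "other"


def stub_sentiment(text: str) -> str:
    """Placeholder for a sentiment model; a crude word match is used here."""
    negative_words = ("broken", "can't", "slow", "confusing")
    return "negative" if any(w in text.lower() for w in negative_words) else "neutral"


def run_pipeline(comments: list[str], conn: sqlite3.Connection,
                 extract_theme: Callable[[str], str] = stub_extract_theme,
                 sentiment: Callable[[str], str] = stub_sentiment) -> None:
    """Analyze each comment and store the results in the database."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS feedback (text TEXT, theme TEXT, sentiment TEXT)"
    )
    rows = [(c, extract_theme(c), sentiment(c)) for c in comments]
    conn.executemany("INSERT INTO feedback VALUES (?, ?, ?)", rows)
    conn.commit()


def theme_counts(conn: sqlite3.Connection) -> list[tuple[str, int]]:
    """Return (theme, count) pairs, most frequent first, ready for a chart."""
    cur = conn.execute(
        "SELECT theme, COUNT(*) FROM feedback "
        "GROUP BY theme ORDER BY COUNT(*) DESC, theme"
    )
    return cur.fetchall()


conn = sqlite3.connect(":memory:")
run_pipeline(
    ["Export is broken on mobile", "Can't find the export option", "Great app"],
    conn,
)
print(theme_counts(conn))  # [('export', 2), ('other', 1)]
```

Because each stage is passed in as a plain function, you can swap a stub for a real model without touching the storage code. That is also the part you would own and maintain going forward.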

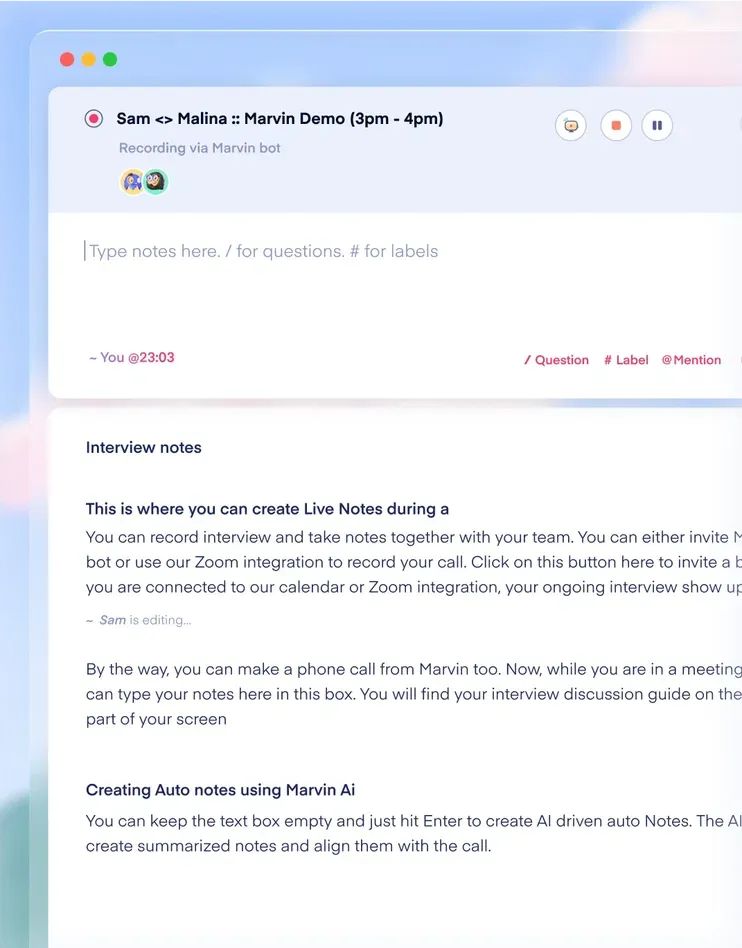

Option 2: Use a full-stack AI research platform

This option is best if you want speed, rigor, and traceability, but don’t want to maintain models. It’s an “all-in-one lab” approach where:

- You upload everything (interviews, surveys, support tickets, etc.) to a single platform.

- The platform supports transcription, AI tagging, theme clustering, and insight tracking.

- You can complete cross-project research with all the integrations you need (Zoom, Slack, survey/interview tools, CRM, product analytics, etc.).

A single AI customer experience feedback platform gives you access to pre-defined infrastructure. You can customize it to some extent, but you’ll need far fewer resources to run it. And it frees you to focus exclusively on insight analysis and decisions.

If you’re ready to see what this looks like in practice, create a free account with HeyMarvin. Start analyzing real feedback in minutes and enjoy the benefits of AI-powered deep research.

How to choose the right AI tool for customer feedback

With so many AI tools to consider, it’s easy to get stuck comparing features. But it’s more helpful to focus on workflows.

How does the tool handle data?

Who on your team can access insights and how easily can they explore them?

How easy is it for non-researchers to navigate and use the platform?

If you can picture that tool fitting your way of work, here’s what else to consider:

1. Map your feedback sources

Formatting and uploading CSVs are among the biggest headaches in research analysis. You want to avoid them, which is why you must clarify:

- Where your feedback lives: NPS tools, support tickets, app reviews, transcripts, Slack threads, or beta feedback forms.

- Whether the tool can easily ingest all your feedback sources.

2. Check how it handles open-ended text

Different AI tools rely on different models, prompting strategies, or analysis pipelines. To determine if a particular tool is strong at interpreting feedback, test it with messy input:

“After the last update, I can’t find half the settings, the app feels slower on Android, and support just sent me a generic reply.”

The last thing you want is to see the AI lumping everything under “negative feedback.” A strong tool should clearly separate the signals in the feedback (navigation or discoverability issues, Android performance degradation, support dissatisfaction).
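If you’re comparing several tools, you can score this test instead of eyeballing it. The Python sketch below assumes you’ve written down the signals you expect for each test comment and manually mapped each tool’s output to the same label names. The function names and labels are our own illustration, not part of any vendor’s API.

```python
def signal_recall(expected: dict[str, set[str]],
                  tool_output: dict[str, set[str]]) -> float:
    """Fraction of expected signals the tool surfaced across all test comments."""
    total = sum(len(signals) for signals in expected.values())
    if total == 0:
        return 1.0
    found = sum(
        len(signals & tool_output.get(comment_id, set()))
        for comment_id, signals in expected.items()
    )
    return found / total


def missing_signals(expected: dict[str, set[str]],
                    tool_output: dict[str, set[str]]) -> dict[str, set[str]]:
    """For each test comment, list the expected signals the tool did not report."""
    return {
        comment_id: signals - tool_output.get(comment_id, set())
        for comment_id, signals in expected.items()
        if signals - tool_output.get(comment_id, set())
    }


expected = {
    "update-comment": {"navigation", "android performance", "support"},
}
lumping_tool = {"update-comment": {"negative feedback"}}
stronger_tool = {"update-comment": {"navigation", "android performance", "support"}}

print(signal_recall(expected, lumping_tool))   # 0.0
print(signal_recall(expected, stronger_tool))  # 1.0
```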

3. Evaluate transparency

If you cannot trace an insight back to raw data, you cannot defend it in front of stakeholders. Traceability is non-negotiable, and you shouldn’t have to settle for generic findings (“Navigation friction is a top theme.”).

For each finding, the tool should provide access to source quotes, mention stats, and segment or time-range filters.
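One way to picture a traceable finding is as a theme that always carries its evidence with it. The Python sketch below is a hypothetical data model (the `Quote` and `Finding` classes and their fields are our own assumptions, not any tool’s schema), but it shows the three things to look for: source quotes, a mention count, and segment or time-range filters.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Quote:
    text: str
    source: str    # e.g. "support ticket", "interview"
    segment: str   # e.g. "enterprise", "free plan"
    received: date


@dataclass
class Finding:
    theme: str
    quotes: list[Quote] = field(default_factory=list)

    def mention_count(self) -> int:
        """Number of source quotes backing this finding."""
        return len(self.quotes)

    def filtered(self, segment: Optional[str] = None,
                 start: Optional[date] = None,
                 end: Optional[date] = None) -> "Finding":
        """Return the same finding restricted to a segment and/or date range."""
        kept = [
            q for q in self.quotes
            if (segment is None or q.segment == segment)
            and (start is None or q.received >= start)
            and (end is None or q.received <= end)
        ]
        return Finding(self.theme, kept)


finding = Finding("navigation friction", [
    Quote("Too many clicks to reach settings", "survey", "enterprise", date(2024, 5, 2)),
    Quote("Can't find the export option", "support ticket", "free plan", date(2024, 6, 11)),
])
print(finding.mention_count())                               # 2
print(finding.filtered(segment="enterprise").quotes[0].text)
```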

4. Check integrations and insight sharing

Findings should be easy to access and incorporated into everyday decision-making. You don’t want them to sit in reports nobody looks at.

Ask yourself:

- Does it integrate with the systems your teams already use (Slack, Jira, Notion, Miro, Figma, CRM tools, product analytics)?

- Can you easily link user insights to features, tickets, or roadmap items?

- Can teams access findings directly instead of sharing screenshots or copying quotes?

5. Assess scale vs control

Some tools are black boxes. You upload your data and press a button, and they return themes or summaries. You don’t get much visibility into how the system reached those conclusions.

Others give you more control. You can adjust prompts, define categories, build custom taxonomies, or refine how to tag and cluster feedback.

In research workflows, transparency and control usually matter more than speed alone.

If you have strong opinions about your research process, flexibility will be a priority. Therefore, you’ll want the ability to shape how insights are generated and organized.

But if your priorities are speed and automation, you might accept less control in exchange for more built-in, automated analysis.

6. Check data privacy and security

Users may share confidential information such as names, emails, company or product information, and even health or financial details.

Depending on your users’ location or the industry you operate in, some regulatory requirements may apply. You may need GDPR compliance, SOC 2 certification, HIPAA support, or data processing agreements. So specifically check how the AI tools handle your data.

- Is it used to train their models?

- Can you opt out of model training?

- Where and for how long do they store your data?

And do not forget about access controls.

- Can you restrict who sees raw transcripts or set role-based permissions?

- Is there SSO support?

These controls ensure sensitive customer information stays protected, while still allowing teams to access the insights they need.

Common challenges in AI customer feedback (and how to address them)

When using AI for customer feedback analysis, most challenges stem from messy data, poor setup, and over- or under-trust in AI automation.

Below are the most common ones, along with their implications and suggestions for addressing them.

Frequently asked questions (FAQs)

Here’s what else you might want to know about AI customer feedback implementation:

How accurate is AI customer feedback analysis?

With clean input and strong clustering models, AI can reliably run thematic analysis and detect trends at scale. It may be less reliable with sarcasm, short answers, or niche domain language. But even for these instances, you can improve accuracy by validating top themes with raw quotes and real user segments.

Can AI detect sentiment in customer feedback?

Yes. AI can detect tone and emotional signals beyond simple positive or negative scoring. It analyzes word patterns, intensity, and context, often identifying frustration, confusion, delight, urgency, or skepticism. Still, consider reviewing edge cases where the tone is subtle or sarcastic.

What types of customer feedback can AI analyze best?

AI works best with large datasets, especially with text feedback. Some of the most suitable sources you can feed it for pattern recognition are:

- NPS open-ended responses

- Support tickets

- Surveys

- Interview transcripts

- App store reviews

Is AI customer feedback secure for enterprise teams?

It can be secure if the platform is built for enterprise use. Security depends on compliance standards, data handling, and access controls. Look for GDPR alignment, SOC 2 certification, role-based permissions, and clear data retention policies.

Getting started

When you centralize insights, accelerate synthesis, and make evidence easy to trace, you design better products. You can build faster, with fewer debates, and with far less spreadsheet fatigue.

If you want that without constantly switching between tools, start with HeyMarvin.

Our AI-native customer insights platform is built for researchers, designers, and product teams who truly build on customer feedback. It turns scattered interviews, surveys, tickets, and notes into a living system that gets smarter over time.

With HeyMarvin, you can:

- Centralize all research in one searchable repository

- Auto-transcribe and take time-stamped AI notes during interviews

- Detect themes, emotions, and trends across thousands of responses

- Run deep qualitative analysis with nested tagging hierarchies

- Ask questions in plain English and get answers grounded in cited evidence

- Share insights easily with product, design, and leadership teams

- Work with enterprise-ready security and governance

Teams at companies such as Microsoft, Entertainment Partners, and Included Health use it to scale research without sacrificing rigor.

Ready to turn feedback into a system your whole team can trust? Book a demo and see HeyMarvin in action.

