The Importance of Qualitative Research within AI Systems: Takeaways from a Leading Researcher

Award-winning qualitative researcher Mary L. Gray shares the principles for building digital experiences that transform everyone's lives for the better.

Artificial intelligence is everywhere. Google Maps uses AI to recommend your best route to work. Self-driving cars promise to eliminate the hassle of dealing with appalling drivers on your way there. You may have already heard of robots assisting in life-saving surgery.

But how are we continuously testing and evaluating AI for accuracy and reliability?

“If we’re going to start relying on AI systems, we’re going to start expecting more and keep ramping up what we expect,” said Mary L. Gray, an author and prominent voice in the ethical use of technology.

As Senior Principal Researcher at Microsoft, Mary chairs the company’s one-of-a-kind Research Ethics Review Program. She views technology through a social scientist’s lens. The increasing importance of qualitative research to AI is crystal clear to her:

“Humanity deserves the attention that UX research has to offer. It’s core to anything we do that’s meaningful.”

Mary shared her insights about the current state of the AI industry, the challenges that researchers face and a few solutions of her own.

Status Quo of Qualitative Research in AI Systems

“A qualitative understanding of the problem is the best way to set up the use of a dataset to model a decision,” Mary said.

Alas, peers in computer science and engineering (CS&E) aren’t programmed to think that way — there’s a chasm between the CS&E and social science schools of thought.

Building a technical system involves creating mathematical models to be tested. These models isolate variables and throw content and context (the “meat and potatoes” of UX work) out the window.

“We’re still at that point where CS&E don’t see human computer interaction (HCI) as solving hard problems,” Mary said.

She isn’t wrong. Most AI firms have neither fully embraced the role of researchers nor given due weight to qualitative, social science research.

“The elephant in the room (is) that CS&E have mostly treated those pieces of the build as ‘nice to have’,” she said.

Mary argued that involving subject experts from various fields fosters product innovation. Rather than stumbling upon innovative ideas by chance, teams can tackle the question “What problem are we solving?” with surgical precision.

A number of AI companies neglect to hire enough qualitative researchers to make an impact. Others don’t even bother. Some, however, have recognized the need to involve specialists in their research. Mary pointed to Sentient Machines and OpenAI:

“They’ve employed linguists, folks on their team thinking about language models (and) annotating training data. There is an absolute demand in different moments for developing models that involve a range of experts.”

According to Mary, no one is better placed than qualitative researchers to coordinate collaboration among specialists. “UX researchers are in the best position to manage the relationships and data collection.”

She encouraged further convergence of the social sciences and design research.

“(My) hope is that the frameworks and methodologies familiar to anthropology (and) qualitative sociology that tend to stay really siloed in those disciplines, start informing HCI and other design approaches in a really rigorous way.”

Oh, the Humanity of AI Innovation

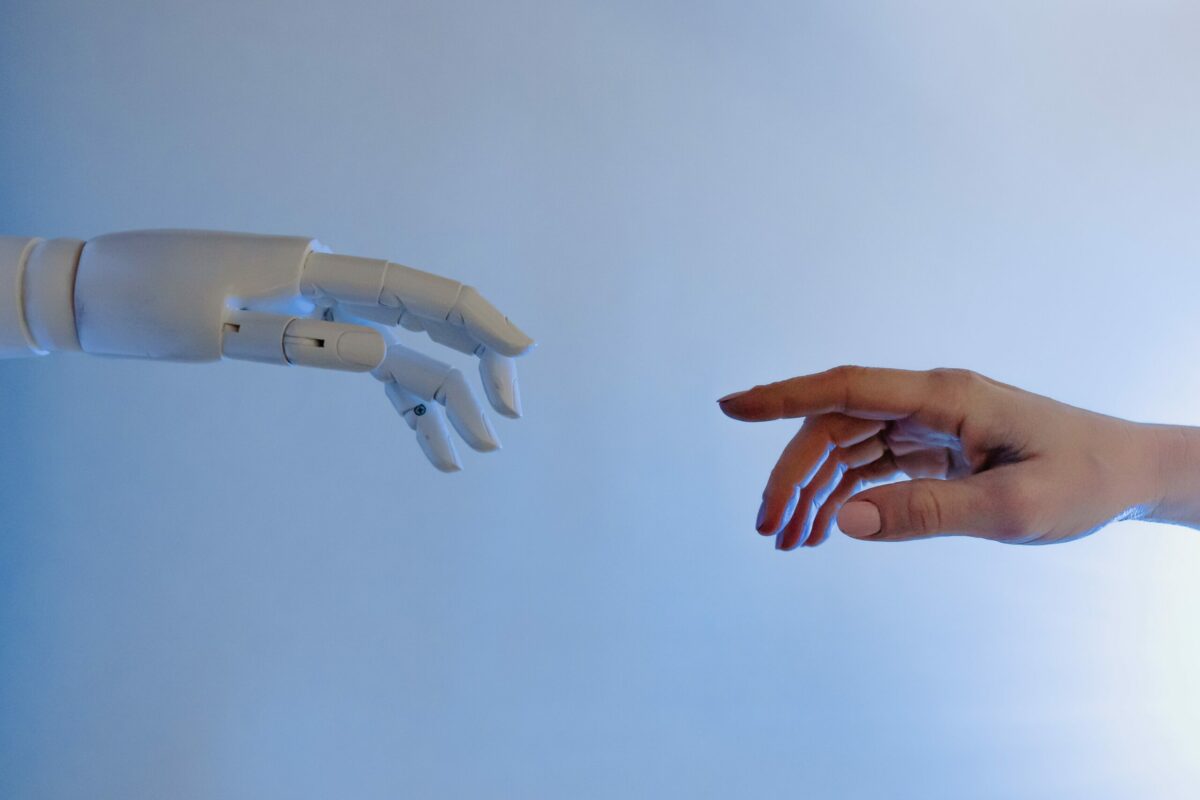

AI and machine learning rely on vast amounts of training data.

“There’s a belief that AI innovation hinges on how much data you have access to,” Mary said. “(It’s) the specialization of AI that distinguishes it, not the generalizability. For an AI system to offer anything distinct or useful to a human, (it) has to be working with higher quality data.”

Raw data alone is of little use. It has to be annotated or labeled before any meaning can be extracted from it. Human intervention is key.

Deloitte’s European Workforce survey illustrates the importance of human interpretation to machine learning. The authors conducted a sentiment analysis of a single qualitative, open-ended question. An algorithm was pitted against a three-person research team, with the task of sorting responses into themes to gauge overall employee sentiment. The analysis is broken down step by step, showing the output of human vs. machine at each stage.

Compared to human researchers, the machine completed said task in a very short time. However, its word categorizations made little sense. The algorithm performed poorly in comprehending words together as phrases and reading between the lines. The researchers spent time understanding the responses, coming up with meaningful categories that captured the tone and sentiment of employee responses. Clearly, human capabilities will continue to be essential in the continuous improvement of AI technology. [Technically, we could’ve used AI to transform the transcript from Mary’s interview into a quick summary, but wouldn’t you much rather read a human-crafted blog instead?]
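
To make the gap concrete, here is a minimal Python sketch of why scoring words in isolation misreads phrases. It is not the method used in the Deloitte study: the lexicon, the negator list and the sample responses are all made up for illustration, and a single-word lookback for negation is only one crude way to approximate what a human reader does without thinking.

```python
import re

# Hypothetical toy lexicon and negators; not taken from the Deloitte study.
WORD_SCORES = {"good": 1, "great": 1, "happy": 1, "bad": -1, "stressful": -1, "poor": -1}
NEGATORS = {"not", "never", "hardly", "no"}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def word_level_score(text):
    """Score each word in isolation, ignoring its neighbours."""
    return sum(WORD_SCORES.get(tok, 0) for tok in tokenize(text))


def phrase_aware_score(text):
    """Flip a word's score when the token right before it is a negator."""
    tokens = tokenize(text)
    total = 0
    for i, tok in enumerate(tokens):
        score = WORD_SCORES.get(tok, 0)
        if i > 0 and tokens[i - 1] in NEGATORS:
            score = -score
        total += score
    return total


# Made-up survey responses for illustration.
responses = [
    "The workload is not good",
    "My manager is great",
    "Never happy with the tools we are given",
]
for response in responses:
    print(f"{word_level_score(response):+d} {phrase_aware_score(response):+d}  {response}")
```

The word-level scorer rates “not good” as positive because it only sees “good”. Even the phrase-aware version handles just one pattern; sarcasm, context and tone still need a human reader.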

The authors also touch on the role of machines in AI as either a tool, assistant, peer or manager (in ascending order of autonomy). Along these lines, Mary was keen to point out that technology is a medium or facilitator in performing tasks:

“Tech can’t fix anything. It can assist us in addressing the needs we have in the world. It’s never a solution. It makes us think about possibilities.”

Whether you’re filling out an online employee survey, impulse shopping on Instagram or scrolling through your Twitter feed, every tech ecosystem has social moments. These likely can’t be reduced to a data point, but are worth more probing and exploration.

“Technical systems are part of the fabric of our social lives (and) interactions. You don’t fix or solve socialness. You just do it,” Mary said.

It’s important to understand that there are people or objects behind these data points. Until we fully understand people and their behavioral quirks (that’ll never happen), research should never cease.

“Think about how much we’re going to need UX research because we’re talking about our social lives. People are not predictable. We’re pretty whimsical.”

AI Systems and the People They Serve

Plenty of evidence exists that current systems aren’t equitable for everyone. Twitter’s now-defunct ethics team (thanks, Elon!) uncovered racial bias in a photo-cropping algorithm, and also revealed that right-leaning news sources were promoted more than left-leaning ones.

Twitter isn’t alone. Apple’s credit card algorithm was accused of discriminating against women, and Amazon scrapped an automated résumé screening tool that penalized them. Software used by leading hospitals in the US disadvantaged Black patients on kidney transplant lists.

AI is dependent on training data — a human input. And humans are, well, human. We all have biases, whether we exhibit them or not. These biases are reflected in training data, baked into algorithms and can marginalize certain demographics.

Mary cited an example of public concern and criticism surrounding Microsoft: “Facial recognition systems were clearly failing specific members of society. It’s important for companies and practitioners to start on a road of thinking about responsible AI.”

She has no doubt who companies are obligated to: “If we think technology is going to save the day, and we aren’t looking at the people who actually carry out services in communities, particularly for community members who are most vulnerable, we are completely missing the opportunity economically, socially to make a difference.”

AI is a relatively new frontier that has enjoyed a largely unregulated environment. However, governments and lawmakers are beginning to regulate it.

The EU published a white paper in 2020, recognizing that regulation is essential to the development of AI tools that people can trust.

This is a decent start, but Mary would like to see regulatory authorities put the systems under the microscope.

“We want to approach any of these systems from the assumption that (anybody) can build a system. A system that can be vetted, audited. Ongoing auditing that’s meaningful, not just a moment-in-time stamp to say it was fair when it went out the door. That’s necessary, but not sufficient.”
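
As a rough illustration of the difference between a one-off stamp and ongoing auditing, the Python sketch below re-runs the same fairness check on every batch of decisions instead of only at launch. Everything in it is an assumption for illustration: the group labels, the decision logs, the function names, and the 0.8 threshold, which is a placeholder rather than a standard Mary endorsed. A real audit would also look well beyond a single ratio.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Turn (group, approved) pairs into an approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def audit_over_time(batches, threshold=0.8):
    """Re-run the check for every period and return the periods needing review."""
    return [
        period
        for period, decisions in batches.items()
        if disparity_ratio(decisions) < threshold
    ]


# Hypothetical decision logs: the system looks fair at launch, then drifts.
batches = {
    "launch": [("A", True)] * 2 + [("A", False)] * 2
    + [("B", True)] * 2 + [("B", False)] * 2,
    "month_6": [("A", True)] * 3 + [("A", False)]
    + [("B", True)] + [("B", False)] * 3,
}
print(audit_over_time(batches))  # prints ['month_6']
```

A launch-only check would have passed this system. Only the repeated check catches the later drift, and deciding why it happened and what to do about it is still a job for people.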

Changing the Mindset of Qualitative Research

Today, jobs for AI Ethicists list a computer science degree as a prerequisite. An education in CS&E skews candidates toward thinking that everything is explained by a mathematical abstraction. If AI Ethicists are trained in the values of CS&E, will they be able to objectively identify flaws in CS&E systems?

Shifting the mindset involves rewiring the way computer scientists and engineers think and act, so that they fully understand the implications of what they are building. So how does the mindset change within the research community? How would they kick off the process?

Begin with the 10-12 educational institutions that act as the assembly line of workers for large tech companies. Mary encouraged adoption of Barbara Grosz’s approach: embed ethics into computer science programs to get students to think about their responsibilities as technologists.

“You have to interact with people who are going to carry the benefits and risks of a system you’re building. If that was something you learned from day one, throughout undergrad, (as) part of every boot camp — how do we hold ourselves accountable? Make it impossible to graduate without asking basic questions before you put anything into the world.”

Mary is adamant that the responsibility must exist not at the individual level but at a collective one. Practitioners should be of the mindset that they do not represent company A or B, but the industry itself.

“It gives us a path of addressing that and finding how to make amends as a set of practitioners, instead of turning it into a case of a barrel of apples with one bad one in there,” Mary said. “That’s not ethics — that’s politics. Ethics is when we hold ourselves accountable and each other to a standard that’s actively being debated.”

Qualitative Research: The Key to More Ethical AI

Harvard social scientist Gary King reshaped our understanding of the research paradigm: “All research is qualitative; some is also quantitative.” Every study can be traced back to a single, qualitative question.

Qualitative research aims to capture the complexity of human nature. At the end of the day, technical systems are designed for and woven into human lives to enhance our existence. From résumé screening to facial recognition, humans must be at the center of the thought process. Mary even suggests changing the meaning of the well-known acronym HCI from ‘human computer interaction’ to ‘human-centered innovation’. While building such elaborate systems, researchers must constantly circle back to the end user. Who are we obligated to? What problem of theirs are we trying to solve? Are all demographics represented equally? That’s human-centered innovation.

Qualitative researchers will always be an essential cog in the research machine.

“Qualitative research is more important than ever,” Mary said. “AI does not replace it; in fact, it will increasingly need it.”

Want to hear more? Catch the full interview with Mary here.

Photo by Tara Winstead.

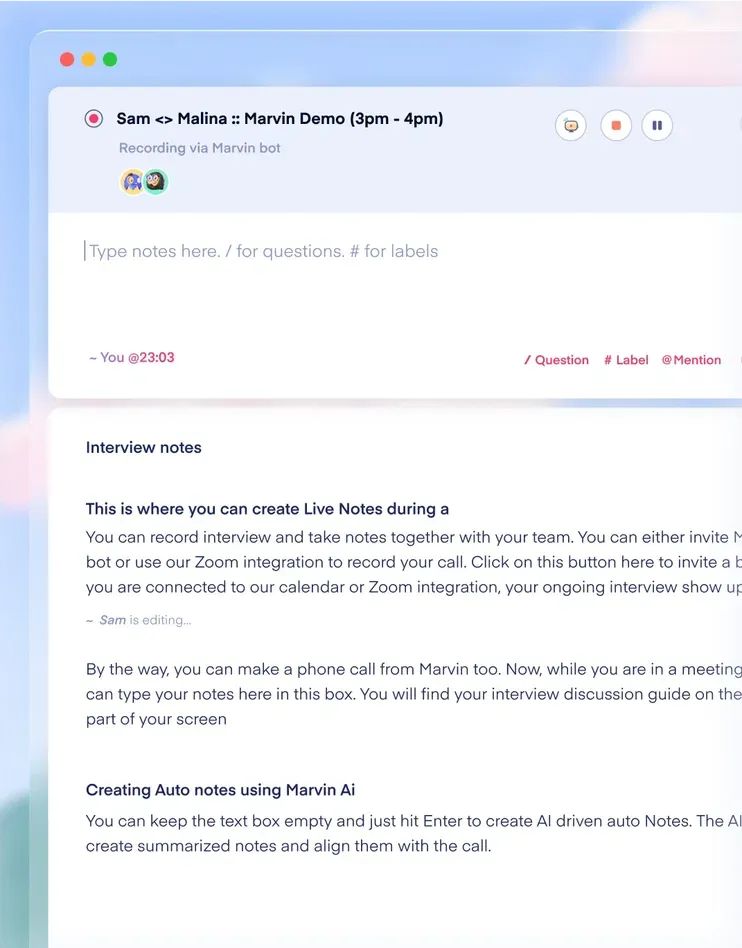

See Marvin AI in action

Want to spend less time on logistics and more on strategy? Book a free, personalized demo now!

.svg)